On February 27, 2026, PointFive announced DeepWaste™ AI, which takes a metadata-first, privacy-preserving approach to optimizing production AI and offers deeper analysis only when customers opt in. Alongside multi-cloud coverage and full-stack scope, the announcement addresses an operational requirement many AI teams face: optimization must be possible without default access to sensitive inference content.

The Tension: Insight vs. Access

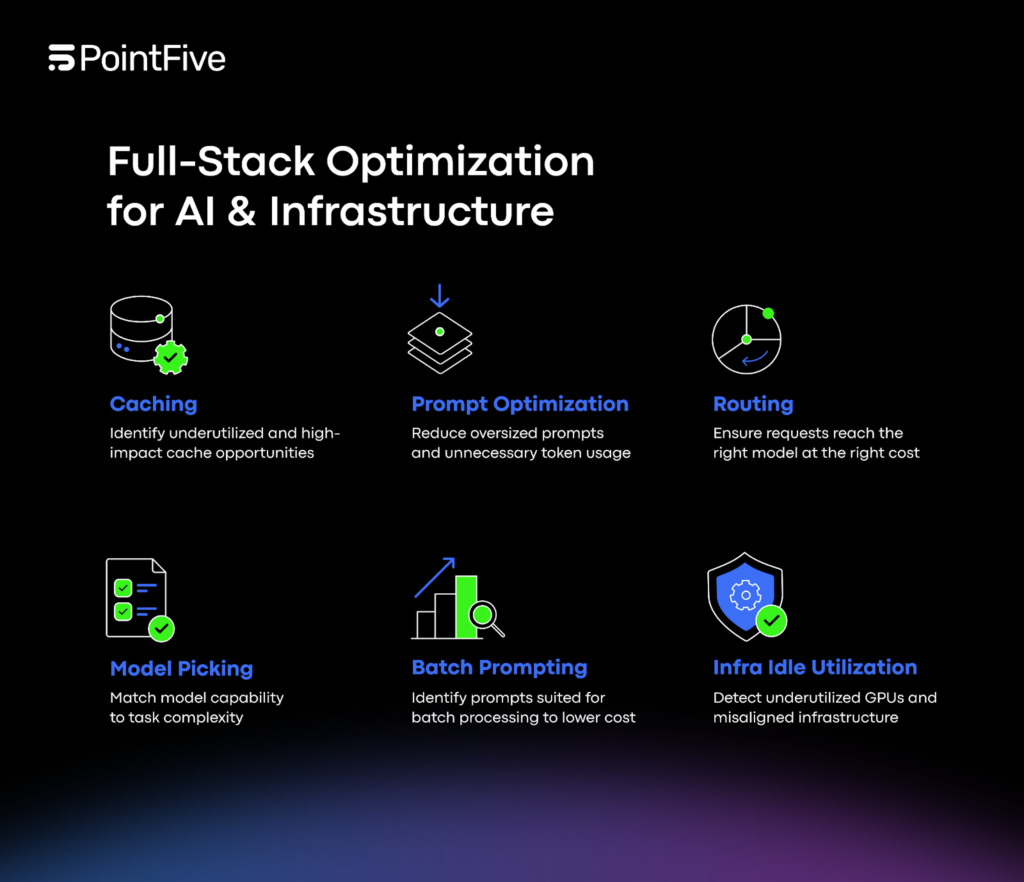

As AI adoption scales, inefficiency becomes layered, spanning model selection, token consumption, routing logic, caching behavior, GPU utilization, retry patterns, and data platform orchestration. Many organizations want to reduce waste across these layers, but they also want to limit data exposure. Some teams can’t route raw inference logs widely due to policy; others prefer to keep content access tightly controlled. The result is a practical question: can you achieve meaningful efficiency gains using system-level signals rather than reading everything that passes through the model?

PointFive’s answer is DeepWaste AI’s default operating mode: optimization using metadata and performance signals, not raw content.

Agentless by Design

PointFive says DeepWaste AI connects directly to cloud APIs, LLM service metrics, GPU telemetry, and billing systems without agents, instrumentation, or code changes. This agentless design aims to reduce operational overhead and allow adoption without modifying applications or deploying additional software on hosts.

By default, the module runs optimization using metadata, billing signals, performance metrics, and resource configuration data. PointFive states that it does not require access to raw inference logs in its default mode, enabling organizations to uncover structural inefficiencies while preserving customer privacy and minimizing data access requirements.
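To make the metadata-first idea concrete, here is a minimal sketch of reducing an invocation event to non-content signals before any analysis runs. It is an illustration only, not PointFive's implementation: the `InvocationMetadata` type, the `to_metadata` helper, and every field name in the sample event are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class InvocationMetadata:
    """Non-content signals only (hypothetical schema for illustration)."""
    model: str
    input_tokens: int
    output_tokens: int
    latency_ms: float
    cost_usd: float


def to_metadata(raw_event: dict) -> InvocationMetadata:
    # Copy only the whitelisted fields; prompt and completion text are never read.
    return InvocationMetadata(
        model=raw_event["model"],
        input_tokens=raw_event["input_tokens"],
        output_tokens=raw_event["output_tokens"],
        latency_ms=raw_event["latency_ms"],
        cost_usd=raw_event["cost_usd"],
    )


# Hypothetical event; the "prompt" and "completion" keys stand in for raw content.
raw_event = {
    "model": "example-model",
    "input_tokens": 1800,
    "output_tokens": 150,
    "latency_ms": 920.0,
    "cost_usd": 0.5,
    "prompt": "sensitive text",
    "completion": "sensitive text",
}

meta = to_metadata(raw_event)
print(meta)
```

Whitelisting fields, rather than deleting known sensitive ones, means a newly added content field is excluded by default.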

Optional Inference-Level Analysis for Deeper Evaluation

For organizations that choose to go further, DeepWaste AI supports optional inference-level analysis to evaluate prompt architecture and orchestration logic. PointFive emphasizes that customers control the depth of analysis and that optimization adapts accordingly. This “choose your depth” model is designed to support different governance levels: some teams may start with metadata-first signals and expand later if policies allow.

Multi-Cloud and Direct API Coverage

DeepWaste AI provides native connectivity across:

- AWS (Bedrock, SageMaker, and AI managed services)

- Azure (Azure OpenAI, Azure ML, Cognitive Services)

- GCP (Vertex AI and AI services)

- OpenAI and Anthropic direct APIs

This matters for privacy and control because provider boundaries often create fragmented monitoring approaches. PointFive’s pitch is that a consistent optimization layer can operate across these environments while keeping the default data footprint to non-content signals.

What Inefficiency Looks Like Without Reading Raw Logs

DeepWaste AI structures and enriches invocations with task classification, routing context, cost attribution, and infrastructure alignment signals. It detects inefficiency across four layers:

- Model & Routing Intelligence: model-task mismatch, routing downgrade opportunities, batch vs. real-time routing misalignment, and workload benchmarking outliers

- Token & Prompt Economics: prompt bloat, context window overprovisioning, output inflation from misconfigured max_tokens, parameter-task misalignment, and structural token waste patterns

- Caching & Reuse Optimization: duplicate inference detection, underutilized native caching capabilities, and cache miss rate inefficiencies

- Infrastructure & Operational Leakage: idle GPUs, instance-type mismatch, driver-level throughput limitations, retry-driven cost inflation, latency outliers, and provisioning misalignment

PointFive notes that detections are grounded in unified workload signals rather than surface-level billing anomalies. The focus is on behavior and configuration: how the system routes, how it spends tokens, whether it repeats work, and how infrastructure aligns to workloads.
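Two of the signals listed above, retry-driven cost inflation and oversized max_tokens settings, can in principle be computed from metadata alone. The sketch below assumes a hypothetical record layout (`attempt`, `cost_usd`, `output_tokens`) and is not a description of how DeepWaste AI performs its detections.

```python
def retry_cost_share(records: list[dict]) -> float:
    """Fraction of total spend that went to retried attempts (attempt > 1)."""
    total = sum(r["cost_usd"] for r in records)
    retried = sum(r["cost_usd"] for r in records if r["attempt"] > 1)
    return retried / total if total else 0.0


def max_tokens_headroom(records: list[dict], max_tokens: int) -> float:
    """Ratio of the configured max_tokens to the longest observed output."""
    longest = max(r["output_tokens"] for r in records)
    return max_tokens / longest


# Hypothetical invocation metadata; no prompt or completion text is involved.
records = [
    {"attempt": 1, "cost_usd": 0.5, "output_tokens": 120},
    {"attempt": 2, "cost_usd": 0.25, "output_tokens": 200},
    {"attempt": 3, "cost_usd": 0.25, "output_tokens": 180},
    {"attempt": 1, "cost_usd": 1.0, "output_tokens": 150},
]

share = retry_cost_share(records)
headroom = max_tokens_headroom(records, max_tokens=4096)
print(f"retry share of spend: {share:.0%}, max_tokens headroom: {headroom:.1f}x")
```

A high retry share or a large headroom ratio is only a prompt for review; whether either reflects real waste depends on the workload.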

From Findings to Action With Quantified Savings

PointFive says DeepWaste AI does not stop at identifying issues. Each finding includes a quantified savings estimate and implementation guidance. Recommendations are prioritized by financial impact and mapped directly to engineering and FinOps workflows. The intent is to let teams evaluate projected savings before making changes, then track realized improvements over time, turning optimization into a measurable discipline rather than an occasional review.
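As a rough illustration of prioritizing by financial impact, the sketch below ranks findings by estimated monthly savings. The `Finding` type, the `prioritize` helper, and all dollar figures are invented for the example; the announcement does not describe how PointFive calculates its estimates.

```python
from dataclasses import dataclass


@dataclass
class Finding:
    """A detected inefficiency with hypothetical cost figures."""
    name: str
    current_monthly_cost: float
    projected_monthly_cost: float

    @property
    def estimated_savings(self) -> float:
        return self.current_monthly_cost - self.projected_monthly_cost


def prioritize(findings: list[Finding]) -> list[Finding]:
    # Largest estimated savings first.
    return sorted(findings, key=lambda f: f.estimated_savings, reverse=True)


# Made-up numbers, for illustration only.
findings = [
    Finding("prompt bloat", 1200.0, 900.0),
    Finding("idle GPUs", 4000.0, 1000.0),
    Finding("duplicate inference", 2000.0, 1500.0),
]

ranked = prioritize(findings)
for f in ranked:
    print(f"{f.name}: ${f.estimated_savings:,.0f}/month")
```

Keeping both the current and projected cost on each finding lets a team compare the estimate against realized spend after a change ships.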

Scaling AI Across Models, Infrastructure, and Data Platforms

“AI workloads introduce a new category of operational complexity,” said Alon Arvatz, CEO of PointFive. “DeepWaste AI gives organizations the intelligence required to scale AI efficiently, across models, infrastructure, and data platforms, without sacrificing control.”

DeepWaste AI is now available to PointFive customers.